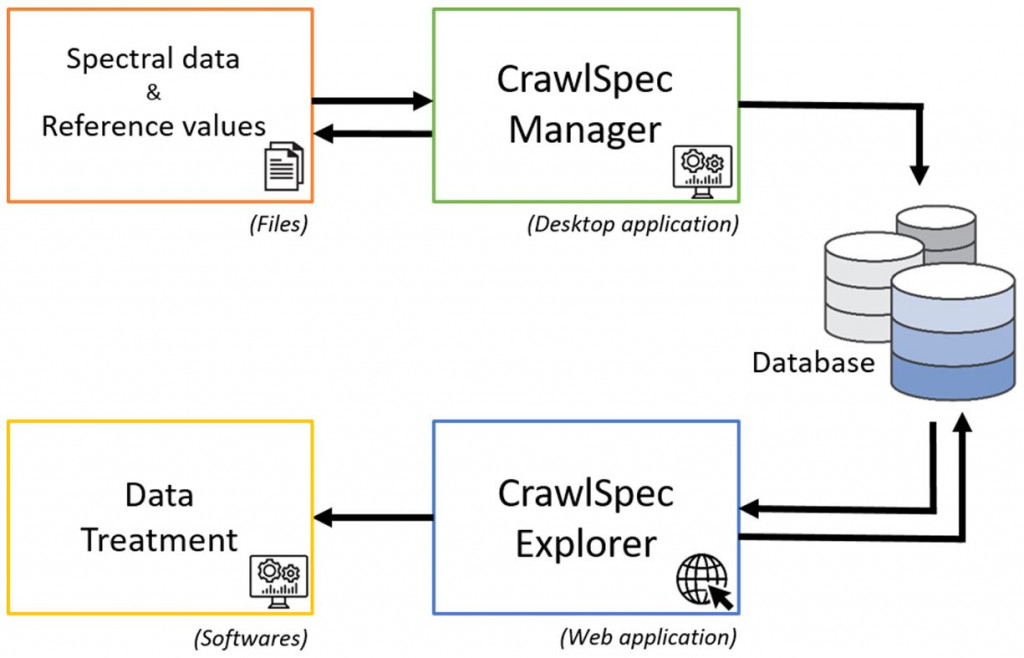

CrawlSpec brings together the tools needed to populate and use the database, focusing on four main features: the centralisation, integration, extraction and exploitation of data. The first step is to introduce semantics that identify the items stored in the database: data identifiers, product categories, and qualitative and quantitative analytical parameters. Using an MS Excel file structure, the qualitative and quantitative reference data generated by various laboratories and experimental units, both internal and external to the institution, can then be centralised by project.

All spectral data files acquired in the laboratory or on site are stored in their proprietary format in a directory tree organised by instrument type, product category and project. On this basis, a local application (CrawlSpec Manager) integrates the information into a centralised, secure database and verifies its integrity.
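The integration step can be pictured with a minimal sketch. It is not CrawlSpec Manager's actual code: the folder depth (`instrument/category/project/file`), the function names `index_spectra` and `verify`, and the use of SHA-256 checksums as the integrity check are all assumptions made for illustration.

```python
import hashlib
from pathlib import Path


def index_spectra(root):
    """Build one record per file found at <root>/<instrument>/<category>/<project>/<file>.

    The four-level layout is an assumption for this sketch.
    """
    records = []
    for path in sorted(Path(root).rglob("*")):
        if not path.is_file():
            continue
        parts = path.relative_to(root).parts
        if len(parts) != 4:
            continue  # ignore files that do not sit at the assumed depth
        instrument, category, project, name = parts
        records.append({
            "instrument": instrument,
            "category": category,
            "project": project,
            "file": name,
            # Checksum of the raw bytes, stored so the file can be re-checked later.
            "sha256": hashlib.sha256(path.read_bytes()).hexdigest(),
        })
    return records


def verify(root, records):
    """Return the records whose file is missing or no longer matches its checksum."""
    changed = []
    for rec in records:
        path = Path(root, rec["instrument"], rec["category"], rec["project"], rec["file"])
        if not path.is_file():
            changed.append(rec)
        elif hashlib.sha256(path.read_bytes()).hexdigest() != rec["sha256"]:
            changed.append(rec)
    return changed
```

Calling `index_spectra("spectra_root")` on such a tree would yield records ready to load into a database, and `verify` could be run later to flag files that have been altered or removed since indexing.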

A web application (CrawlSpec Explorer) provides a further function: submitting queries to the database. Through this simple interface, users can extract spectral data and the corresponding metadata using standardised queries relating to a product category, project or batch of samples.

The results are exported in different file formats according to the specifications of the user.
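As a rough illustration of standardised queries followed by export, the sketch below uses an SQLite table. The table name `spectra`, its columns, the helper names `query_spectra` and `export`, and the choice of CSV and JSON as output formats are hypothetical; the source does not describe CrawlSpec Explorer's schema or formats.

```python
import csv
import io
import json
import sqlite3

# Only these columns may be used as filters, mirroring the idea of
# standardised queries by product category, project or batch.
ALLOWED_FILTERS = ("category", "project", "batch")


def query_spectra(conn, **filters):
    """Return matching rows from the (hypothetical) spectra table as dictionaries."""
    unknown = set(filters) - set(ALLOWED_FILTERS)
    if unknown:
        raise ValueError(f"unsupported filter(s): {sorted(unknown)}")
    sql = "SELECT sample_id, category, project, batch, file FROM spectra"
    if filters:
        # Column names come from the whitelist above; values are bound as parameters.
        sql += " WHERE " + " AND ".join(f"{col} = ?" for col in filters)
    sql += " ORDER BY sample_id"
    cur = conn.execute(sql, tuple(filters.values()))
    cols = [d[0] for d in cur.description]
    return [dict(zip(cols, row)) for row in cur.fetchall()]


def export(rows, fmt):
    """Serialise query results as text in the format requested by the user."""
    if fmt == "json":
        return json.dumps(rows, indent=2)
    if fmt == "csv":
        buf = io.StringIO()
        if rows:
            writer = csv.DictWriter(buf, fieldnames=list(rows[0]))
            writer.writeheader()
            writer.writerows(rows)
        return buf.getvalue()
    raise ValueError(f"unsupported format: {fmt}")
```

A call such as `export(query_spectra(conn, category="wheat", project="P1"), "csv")` would then return the metadata for one product category and project as CSV text.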

CrawlSpec makes it possible to extend the use of the data to the development of calibration and discrimination models. This spectral data management tool will support the agricultural and food sectors in implementing Industry 4.0. It will also help share CRA-W expertise in the field of optical sensors and modelling.